The rise of bots as social media influencers

Does automation in social media affect your organisation? If you’re in government, business, education or politics, the answer might surprise you. Here’s how to manage the negative impact of bots, AI and automated moderation.

Automation in the form of ‘chat bots’ has been with us since the beginning of our current information age. One of the first bots, ELIZA, was created in the 1960s. Even though ELIZA’s creator, AI pioneer Joseph Weizenbaum, considered it to be more joke than serious computing innovation, many helpful bots have been created since those early days. However, there are also malicious bots. Some of these are automated or semi-automated accounts that spread conspiracy theories or disinformation.

For most of us, the goal of automation is to increase efficiency and decrease human labour. Yet social media platforms such as Twitter and Facebook can struggle to detect and remove malicious bots, even as they use automation to improve our experience of the medium.

Should you be automating your own social media accounts? Dr Tobias Keller, a member of a QUT research team studying the influence of bots in social media, says it can be difficult for automated communicators such as bots to balance efficiency with maintaining a good relationship with customers. He also makes the point that each platform has clear guidelines about whether (and how) the use of automation or bots is permitted.

“As human beings, we want to receive helpful and efficient solutions. If I have a bad experience with a product and want to complain, I want to be heard. If I receive only automated messages from a bot that don’t solve my issue, this kind of interaction with a firm would anger me even more.”

The takeaway message? Stay actively engaged with your social media accounts. Even if you’ve automated or semi-automated posting, human oversight of your online conversations is essential. Keep a true record (i.e. an archive) of what you’ve posted so that you can use reliable data to track and resolve any miscommunication issues that arise.

In March this year, Facebook, Twitter and YouTube announced separately that they would be increasing automated moderation on their platforms. A number of factors seem to have influenced this decision, including the increasing sophistication of AI and a desire to reduce the stress and impact on human moderators of checking through millions of flagged images, video and text. Covid-19 also influenced the timing of this increased use of AI, as remote working became a reality for many organisations.

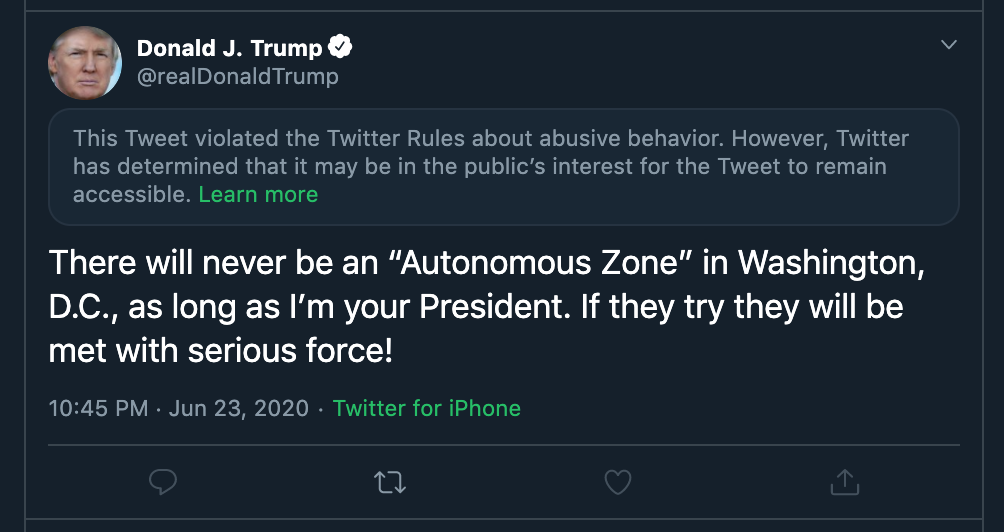

While a high-profile social media identity such as US President @DonaldJTrump might appear in the news for having his tweets moderated, other voices are also having posts hidden and deleted in ways that could be seen as a #fail. One extreme case we read about recently involved people reporting war crimes whose posts were being moderated by AI. Posts of this kind had not previously been hidden or deleted by human moderators. Some reporters are expressing concerns that this increased automation of moderation tasks means messages that could save lives are no longer being heard.

The takeaway message? Take control of your own social media records. If you find your account being moderated incorrectly, you’ll want to be able to retain evidence – and the original records. This can be achieved by using an effective social media archiving tool like Brolly.

We asked the QUT data science team what social media managers and information managers should watch out for, in the face of an increased use of bots and automation.

If you’re considering setting up a bot, Dr Tobias Keller cited a 2015 Sri Lankan example of the possible dangers: “A Twitter bot was set up to retweet a hashtag that would increase awareness of Sri Lanka’s touristic highlights. However, during the presidential election, certain politicians started to use the same hashtag and the bot automatically retweeted Tweets by those politicians. Although this bot had no political purpose in the beginning, it ended up amplifying their political ideas.”

Let’s leave aside for a moment the problem that the original bot may have contravened Twitter’s policies. Even if you are not using bots to automatically retweet, it’s important to manage the #hashtags you use to be sure they have not been adopted by others whose values or purpose you do not share.

The takeaway message? Be aware of current conversations and trends so that you are able to adjust your use of specific hashtags, and avoid accidentally amplifying messages that you don’t want to be seen to agree with. And if you have an active bot, be aware of its limitations and make sure you are following the rules of the platform(s) you’re using.

Keller believes that we share the responsibility for keeping social media safe. “Platforms have a responsibility to provide a healthy environment and every user should make use of the ‘report a user’ or ‘report a message’ functions on these platforms to help out. Organisations need to be aware of the potential dangers of their own automated communications so they can intervene if something goes south.”

We’d also add that even if you’re not using automated bots, you need to be vigilant. It’s like driving a car. You have to be a good driver, but you also need to practise defensive driving to protect yourself from incidents that can affect you even though you didn’t cause them.

Thank you to Dr Timothy Graham, Dr Tobias R. Keller, Professor Axel Bruns and Associate Professor Dan Angus at QUT Digital Media Research Centre for their assistance in drafting and reviewing this article.